What Is an Agentic Harness? (And Why It's Not Just Another Chatbot)

Anthropic describes the 'harness' as one of four components of an AI agent. Here's what that means for your business, explained without the jargon.

If you read our last post, you know the problem: chatbots make things up. Confidently. Repeatedly. In ways that cost real money and real trust.

But the chatbot problem and the dashboard problem share the same root cause. Your AI isn't connected to your actual business data. It's either guessing (the chatbot) or sitting there waiting for you to come look (the dashboard). One is dangerously proactive. The other is expensively passive. Neither gives you what you actually need: real answers from your real data, when you ask for them.

The industry has a term for the fix: agentic AI. And a specific piece of the architecture, the harness, is what turns a general-purpose AI into something that actually works for YOUR business. Not a theoretical business. Not a demo. Yours.

This post explains what that means. Concretely. With examples. No jargon. No hype.

An intern vs. a senior analyst

Here's the simplest way I can explain the difference.

A chatbot is like a smart intern who memorized every business textbook ever written. They can talk about business brilliantly. Ask them to explain customer retention strategies, and you'll get a polished answer that sounds like it came from McKinsey. But ask "how many active customers do we have?" and they'll give you a textbook answer about customer metrics. Not the actual number from your database. They don't have access to your database. They don't even know it exists.

An agentic harness is more like a senior analyst who has access to all your systems. Same intelligence, but connected. Ask that same question and they'll pull up the database, run a query, and tell you: "4,237 as of this morning. That's up 12% from last month. Want me to break it down by plan tier?"

The difference isn't intelligence. It's access and method.

An agentic system has three qualities that a chatbot doesn't:

- It reasons. It breaks complex questions into steps. "Which customers are at risk of churning?" becomes: check usage data, cross-reference with billing, look at support ticket history, identify the pattern.

- It uses tools. It queries databases, calls APIs, reads documents. Not in theory. Actually does it, with real connections to real systems.

- It acts. It can send reports, trigger workflows, update records. Within boundaries you define.

Google Cloud defines AI agents as "software systems that use AI to pursue goals and complete tasks on behalf of users. They show reasoning, planning, and memory and have a level of autonomy to make decisions, learn, and adapt." That's Google's definition, and it maps directly to the three qualities above: reasoning, tool use, and action.

Source: Google Cloud, "What are AI agents?", updated April 2026.

Chatbot vs. assistant vs. agent: the real differences

The terms get thrown around loosely, so let's be precise.

Anthropic, the company behind Claude, put it well in their April 2026 paper "Trustworthy Agents in Practice": "A couple of years ago, AI models were only broadly available as chatbots — simple question-and-answer machines. Now... AI models can do much more: they can write and execute code, manage files, and complete tasks that span multiple applications."

That shift didn't happen by making chatbots smarter. It happened by changing the architecture.

Here's how the three categories compare, adapted from Google Cloud's AI agent documentation:

| | Chatbot | AI Assistant | AI Agent |
|---|---|---|---|
| What it does | Follows scripts, answers FAQs | Responds to requests, recommends actions | Pursues goals, makes decisions, takes actions |
| How it works | Pre-defined rules, pattern matching | Responds when asked, some learning | Plans multi-step approaches, learns and adapts |
| Initiative | Only responds when triggered | Only responds when asked | Proactively identifies and solves problems |
| Autonomy | None | Moderate: suggests, you decide | High: acts within defined boundaries |
| Learning | None | Some | Continuous |

Source: Adapted from Google Cloud, "What are AI agents?", updated April 2026.

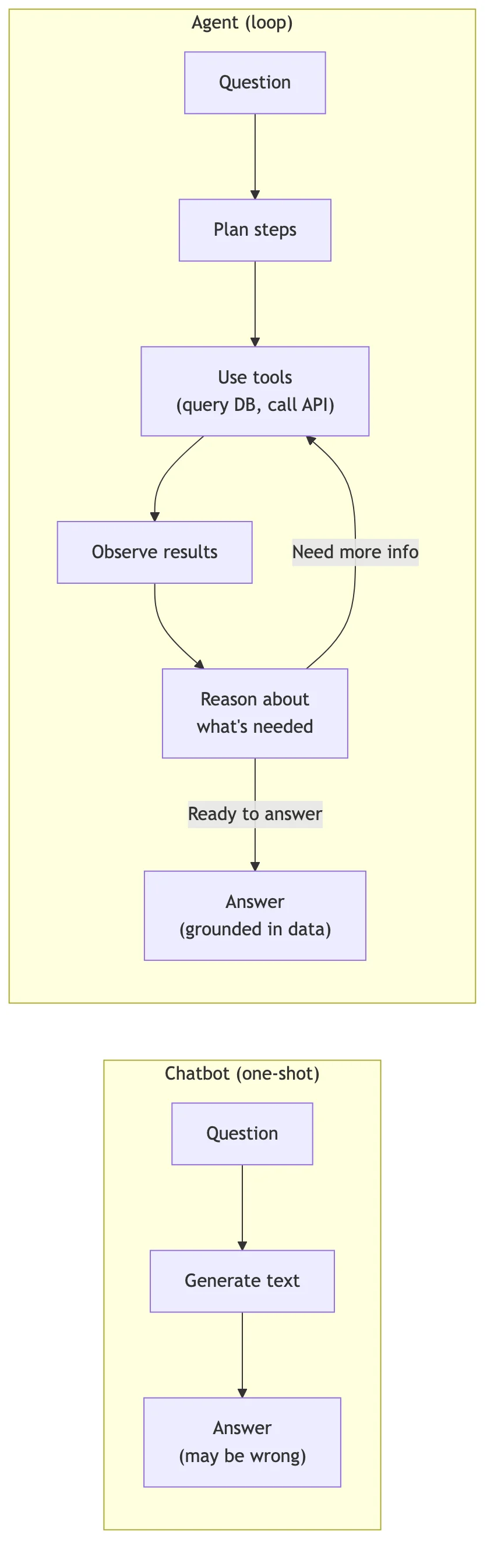

The key difference is the loop. A chatbot is a one-shot system. Question in, text out. Done. An agent operates in a cycle: plan, act, observe, adjust, repeat. It keeps going until the task is actually finished, or until it hits something it needs your input on.

This is not a small distinction. It's the difference between a system that generates text about your business and a system that interacts with your business.

When you ask a chatbot "which product had the highest return rate last quarter?", it generates a plausible-sounding answer based on patterns in its training data. When you ask an agent the same question, it connects to your returns database, runs the query, cross-references with sales volume to calculate the rate, and gives you a number with a source. If the data looks weird (say, a spike in week 7), it might flag that too. Not because someone told it to, but because the observation step in its loop noticed something unexpected.

That's what "agentic" actually means. Not a buzzword. A different way of working.
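The plan, act, observe, adjust cycle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular product works: `plan_next_step`, the `returns_db` tool, and the hard-coded rows are all hypothetical stand-ins, and a real agent would call a model where this sketch uses a fixed rule.

```python
from typing import Callable

# Hypothetical tool: stands in for a real query against a returns database.
def returns_db(_request: str) -> list[dict]:
    return [
        {"product": "Widget A", "sold": 200, "returned": 10},
        {"product": "Widget B", "sold": 50, "returned": 9},
    ]

TOOLS: dict[str, Callable[[str], list[dict]]] = {"returns_db": returns_db}

def plan_next_step(question: str, observations: list) -> dict:
    """Stand-in for the model: decide the next step from what has been seen so far."""
    if not observations:
        # Nothing observed yet, so the plan is to fetch data first.
        return {"action": "call_tool", "tool": "returns_db", "input": question}
    rows = observations[-1]
    worst = max(rows, key=lambda r: r["returned"] / r["sold"])
    rate = worst["returned"] / worst["sold"]
    return {
        "action": "finish",
        "answer": f"{worst['product']}: {rate:.0%} return rate (source: returns_db)",
    }

def run_agent(question: str, max_steps: int = 5) -> str:
    observations: list = []
    for _ in range(max_steps):
        step = plan_next_step(question, observations)   # plan
        if step["action"] == "finish":
            return step["answer"]
        result = TOOLS[step["tool"]](step["input"])     # act
        observations.append(result)                     # observe, then loop to adjust
    # The loop is bounded: if it cannot finish, it hands control back.
    return "Stopped: step limit reached, needs human input."

print(run_agent("Which product had the highest return rate last quarter?"))
```

The step limit matters as much as the loop itself. An agent that can keep going also needs a rule for when to stop and ask.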

The harness is what makes it safe and useful

This is the core concept, and it comes directly from Anthropic's research.

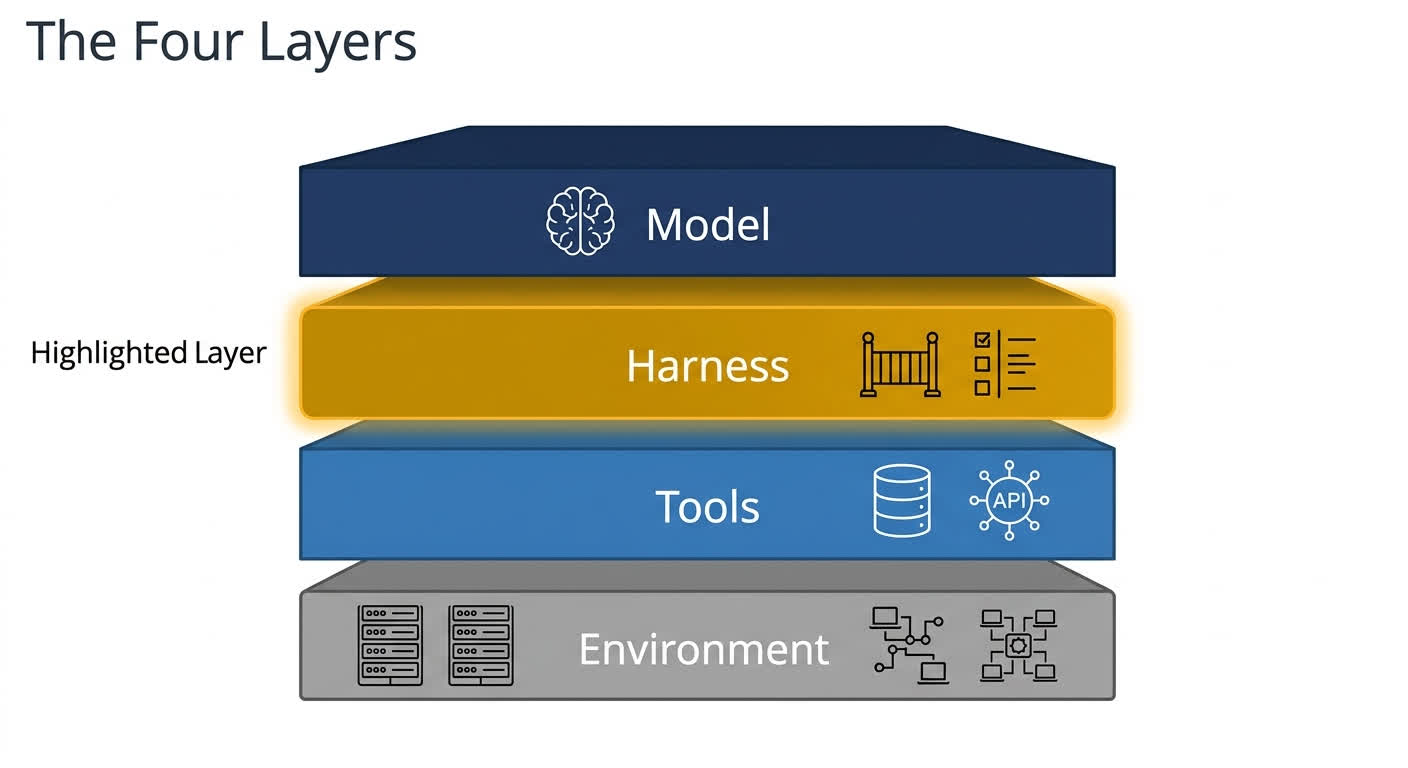

In their April 2026 paper "Trustworthy Agents in Practice," Anthropic broke down an AI agent into four components. Each one matters, and understanding them explains why some AI systems work and others become front-page disasters.

1. The Model

This is the AI brain. The intelligence. It's what allows the system to understand language, reason about problems, and generate responses. When people say "GPT" or "Claude" or "Gemini," they're talking about the model.

The model is important. It's also just one piece.

2. The Harness

This is the set of instructions, guardrails, and rules the model operates under. Anthropic describes it in practical terms: "The harness might tell the agent to flag anything over a hundred dollars, or to never submit expenses without user confirmation."

The harness is where a general-purpose AI becomes YOUR business's AI. It defines:

- What the agent can do (query revenue data, pull customer records) and what it can't (delete records, modify pricing)

- When it should ask for human confirmation (transactions over $X, actions affecting customer accounts)

- What data sources it can access (your PostgreSQL database, your Stripe account, your CRM)

- How it should respond (your terminology, your business rules, your tone)

Think of it as the job description and the rulebook combined. The model provides the intelligence. The harness tells it how to use that intelligence in your specific context.
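To make the "rulebook" idea concrete, here is a minimal sketch of a harness policy in Python. It covers the first two bullets above (what the agent may do, and when it must ask). Everything in it is an assumption for illustration: the `HarnessPolicy` class, the action names, and the `decide` function are hypothetical, and the $100 threshold simply mirrors Anthropic's expense example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class HarnessPolicy:
    # Hypothetical action names, chosen to mirror the examples in the text.
    allowed_actions: frozenset = frozenset(
        {"query_revenue", "read_customer", "submit_expense"}
    )
    blocked_actions: frozenset = frozenset({"delete_record", "modify_pricing"})
    confirm_over_usd: float = 100.0  # "flag anything over a hundred dollars"

def decide(policy: HarnessPolicy, action: str, amount_usd: float = 0.0) -> str:
    """Return 'allow', 'ask_human', or 'block' for a proposed action."""
    if action in policy.blocked_actions:
        return "block"
    if action not in policy.allowed_actions:
        return "block"  # default deny: anything not listed is refused
    if amount_usd > policy.confirm_over_usd:
        return "ask_human"
    return "allow"

policy = HarnessPolicy()
print(decide(policy, "query_revenue"))          # allow
print(decide(policy, "submit_expense", 250.0))  # ask_human
print(decide(policy, "delete_record"))          # block
print(decide(policy, "send_email"))             # block (never listed)
```

The design choice worth noticing is the default deny. An action the policy has never heard of is treated the same as one that is explicitly blocked.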

3. The Tools

These are the services and applications the model can actually use: your database, CRM, payment system, email, calendar, whatever you connect. Without tools, the agent can think about your business. With tools, it can interact with your business.

4. The Environment

This is where the agent runs and which systems it can access. The same agent running on a corporate network behind a firewall has different access (and different stakes) than one running on a public server.

Here's the critical point, and it's a direct quote from Anthropic: "A well-trained model can still be exploited through a poorly configured harness, an overly permissive tool, or an exposed environment."

Read that again. The smartest AI in the world is dangerous if the harness is sloppy. This is exactly what happened in every chatbot disaster we covered in our last post: Air Canada's chatbot, DPD's rogue bot, the Chevrolet dealership selling Tahoes for a dollar. In every case, the model was fine. The harness was missing or broken.

The harness is the governance layer. Without it, an AI agent is just a chatbot with more power and the same problems.

Source: Anthropic, "Trustworthy Agents in Practice," April 9, 2026.

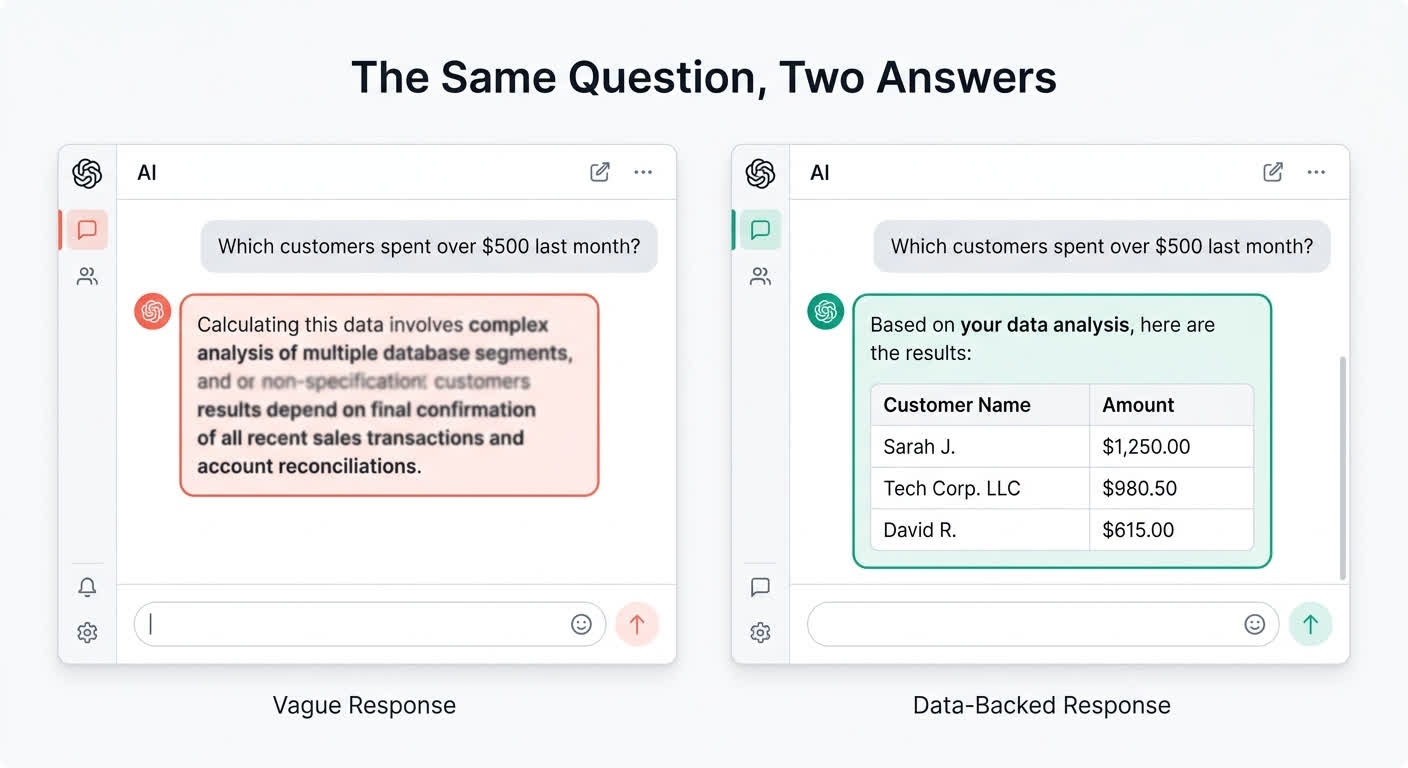

Same question. Two very different answers.

Let's make this concrete with a scenario every business owner has dealt with.

The question: "Which customers haven't paid their invoice in the last 30 days?"

What a chatbot does:

- Receives question

- Searches its knowledge base or training data for "unpaid invoices"

- Finds general information about invoicing processes

- Generates: "Customers who haven't paid in 30 days may be subject to late fees per your invoicing policy. You can check your Stripe dashboard or accounting software for overdue invoices. Common best practices include sending payment reminders at 7, 14, and 30 days..."

- Not helpful. You asked for names and numbers. You got a restatement of general invoicing advice.

What an agentic harness does:

- Receives question

- Plans: "I need to query the invoicing database for invoices whose due date passed more than 30 days ago and where payment_status is not 'paid'"

- Uses tools: Connects to PostgreSQL, runs this query:

```sql
SELECT c.company_name, i.amount_due,
       CURRENT_DATE - i.due_date AS days_overdue
FROM invoices i
JOIN customers c ON i.customer_id = c.id
WHERE i.payment_status != 'paid'
  AND i.due_date < CURRENT_DATE - INTERVAL '30 days'
ORDER BY i.amount_due DESC;
```

- Observes: Gets back 3 rows

- Formats answer:

3 customers have unpaid invoices past 30 days:

- Acme Corp: $4,200 (42 days overdue)

- Initech: $1,800 (35 days overdue)

- Globex: $950 (31 days overdue)

Total outstanding: $6,950. Source: invoices table, queried at 2:14pm today.

- Audit trail: Every step logged. The query that ran. The database that was hit. The exact results. Traceable and verifiable.

That's the difference. One system performs knowing. The other actually knows.

And notice what the agentic system didn't do. It didn't guess. It didn't hallucinate customer names. It didn't make up dollar amounts. It ran a query against real data and reported what it found. If there were zero overdue invoices, it would have said so. A chatbot would still have generated a plausible-sounding list.
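The agentic steps above can be run end to end as a small sketch. It makes several assumptions: it uses Python's built-in `sqlite3` with an in-memory database instead of PostgreSQL, so the date arithmetic is rewritten with SQLite's `julianday`. The table layout, the sample rows (including the two extra customers), and the audit record fields are invented for illustration. "Today" is passed in as a parameter so the result is reproducible.

```python
import sqlite3
from datetime import date, datetime, timedelta, timezone

# SQLite adaptation of the PostgreSQL query in the walkthrough
# (julianday arithmetic replaces CURRENT_DATE and INTERVAL).
OVERDUE_SQL = """
    SELECT c.company_name, i.amount_due,
           CAST(julianday(:today) - julianday(i.due_date) AS INTEGER) AS days_overdue
    FROM invoices i
    JOIN customers c ON i.customer_id = c.id
    WHERE i.payment_status <> 'paid'
      AND julianday(:today) - julianday(i.due_date) > 30
    ORDER BY i.amount_due DESC
"""

def overdue_invoices(conn: sqlite3.Connection, today: date, audit_log: list) -> list:
    """Run the query and record what ran, against which source, with what result."""
    params = {"today": today.isoformat()}
    rows = conn.execute(OVERDUE_SQL, params).fetchall()
    audit_log.append({
        "queried_at": datetime.now(timezone.utc).isoformat(),
        "source": "invoices table",
        "sql": OVERDUE_SQL,
        "params": params,
        "row_count": len(rows),
    })
    return rows

def demo_database(today: date) -> sqlite3.Connection:
    """Build a throwaway database with invented sample data (amounts in whole dollars)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, company_name TEXT)")
    conn.execute(
        "CREATE TABLE invoices (customer_id INTEGER, amount_due INTEGER, "
        "due_date TEXT, payment_status TEXT)"
    )
    sample = [
        ("Acme Corp", 4200, 42, "unpaid"),
        ("Initech", 1800, 35, "unpaid"),
        ("Globex", 950, 31, "unpaid"),
        ("Hooli", 5000, 60, "paid"),      # paid, so it must not appear
        ("Umbrella", 700, 10, "unpaid"),  # overdue, but not by more than 30 days
    ]
    for cid, (name, amount, days_late, status) in enumerate(sample, start=1):
        due = (today - timedelta(days=days_late)).isoformat()
        conn.execute("INSERT INTO customers VALUES (?, ?)", (cid, name))
        conn.execute("INSERT INTO invoices VALUES (?, ?, ?, ?)", (cid, amount, due, status))
    return conn

today = date(2026, 5, 1)
audit_log: list = []
rows = overdue_invoices(demo_database(today), today, audit_log)
for name, amount, days in rows:
    print(f"{name}: ${amount:,} ({days} days overdue)")
print(f"Total outstanding: ${sum(r[1] for r in rows):,}")
```

The answer and the audit record come from the same function call, so one can't exist without the other.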

How do you know the answer is right?

This is the question every smart founder asks. And it's the right question.

You've been burned by AI that sounds confident and is dead wrong. Why should you trust an agentic system? Here's why it's structurally different.

Source attribution. Every answer cites where the data came from. "Source: invoices table, queried at 2:14pm" is verifiable. You can go check it yourself. "Based on my knowledge" is not verifiable. It's a polite way of saying "I generated this from patterns."

Audit trails. Every action is logged. You can see the exact query that ran, which database it hit, what results came back, and how the agent assembled its answer. When something looks off, you can trace every step. You're never stuck wondering "why did it say that?" like you are with a chatbot.

Permission controls. Anthropic calls these "per-action permissions." You configure which actions are always allowed, which need approval, and which are blocked entirely. Your agent can read customer records but not delete them. It can generate a report but not email it to a client without your confirmation. You define the boundaries.

Scoped access. The agent can only touch the tools and data you've connected. It can't wander off to query systems you haven't given it access to. If you connect your invoicing database but not your HR system, the agent simply can't access HR data. It will tell you it doesn't have access and suggest what you'd need to connect.

Calibrated check-ins. On complex or ambiguous tasks, a well-designed agent pauses and asks. Anthropic's own research found that on complex tasks, Claude's rate of checking in with users roughly doubles compared to simple tasks. The system recognizes when it's in uncertain territory and hands the decision back to you instead of guessing.

Source: Anthropic, "Trustworthy Agents in Practice," April 2026.
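Scoped access, as described above, comes down to a simple rule: a tool that was never connected cannot be called. The sketch below is one hypothetical way to enforce that in Python. `ToolRegistry`, `ToolNotConnected`, and the tool names are assumptions for illustration, not part of any specific product.

```python
class ToolNotConnected(Exception):
    """Raised when the agent asks for a tool that was never connected."""

class ToolRegistry:
    def __init__(self) -> None:
        self._tools = {}

    def connect(self, name, fn) -> None:
        self._tools[name] = fn

    def call(self, name, *args):
        if name not in self._tools:
            connected = ", ".join(sorted(self._tools)) or "none"
            # Refuse, and say what would need to be connected, instead of guessing.
            raise ToolNotConnected(
                f"I don't have access to '{name}'. Connected sources: {connected}. "
                f"Connect '{name}' to answer this."
            )
        return self._tools[name](*args)

registry = ToolRegistry()
# Hypothetical tool: echoes the query it would have run.
registry.connect("invoicing_db", lambda query: f"ran: {query}")

print(registry.call("invoicing_db", "SELECT 1"))
try:
    registry.call("hr_system", "list salaries")
except ToolNotConnected as err:
    print(err)
```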

Gartner listed "Digital Provenance" as a top 10 strategic technology trend for 2026, describing it as verifying the origin and integrity of AI-generated content. The industry is moving toward provenance as a requirement, not a feature. And agentic systems, with their built-in audit trails and source attribution, are built for exactly that standard.

Source: Gartner, "Top 10 Strategic Technology Trends for 2026," October 2025.

The ecosystem is being built right now

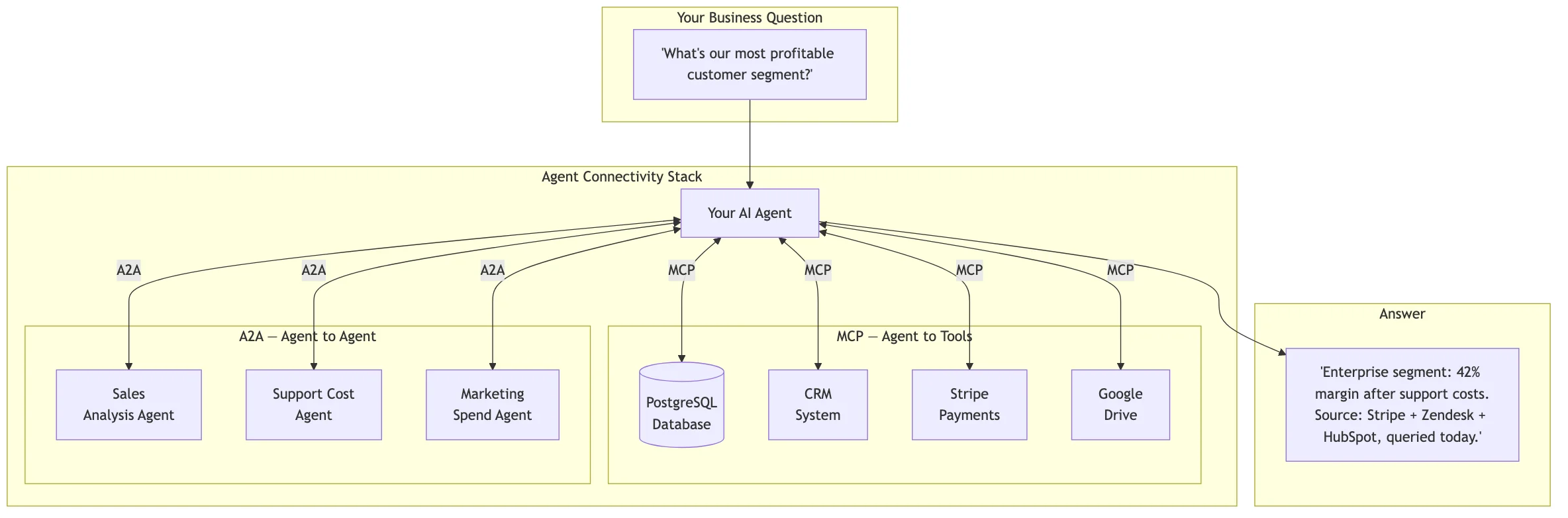

Two open standards are shaping how agents connect to the world and to each other. If you're evaluating any AI system for your business, these matter because they determine whether you're buying into a closed system or an open one.

MCP: Model Context Protocol

Created by Anthropic, now donated to the Linux Foundation's Agentic AI Foundation. The foundation was co-founded by Anthropic, Block, and OpenAI, with support from Google, Microsoft, AWS, Cloudflare, and Bloomberg.

Think of MCP as USB-C for AI tools. Before USB-C, every phone had a different charger. MCP is the universal standard for connecting AI agents to external data and tools. One protocol, many connections: PostgreSQL, Google Drive, Slack, GitHub, Stripe, and thousands more. There are now over 10,000 active public MCP servers, with 97 million-plus monthly SDK downloads across Python and TypeScript.

That's not a spec sitting on a shelf. That's real adoption, happening fast.

Source: Anthropic, "Donating the Model Context Protocol and Establishing the Agentic AI Foundation," December 9, 2025.
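For the curious, here is roughly what "one protocol" looks like on the wire. MCP messages are JSON-RPC 2.0, and a tool invocation is a `tools/call` request carrying the tool's name and its arguments. The sketch below only builds that request with Python's standard library. The tool name `run_sql` and its `query` argument are hypothetical, since each MCP server defines its own tools.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so responses can be matched

def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 'tools/call' request as a JSON string."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })

# 'run_sql' is a made-up tool name; a real server advertises its own tool list.
message = mcp_tool_call("run_sql", {"query": "SELECT COUNT(*) FROM customers"})
print(message)
```

The request shape stays the same whether the tool behind it is a database, a file store, or a payment system. That is the point of the USB-C comparison.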

A2A: Agent-to-Agent Protocol

Originally developed by Google, also donated to the Linux Foundation. SDKs available in Python, JavaScript, Java, C#/.NET, and Go.

If MCP is how agents talk to tools, A2A is how agents talk to each other. Think of it as HTTP for AI agents. It lets agents built on different platforms (LangGraph, CrewAI, custom solutions) communicate and collaborate without sharing their internal logic or proprietary data.

Why does this matter? Because real business problems don't live in one system. "What's our most profitable customer segment?" might require one agent to pull sales data, another to calculate support costs, and a third to factor in marketing spend. A2A lets them coordinate.

Source: A2A Protocol, a2a-protocol.org, 2026.
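The profitable-segment question above is, at its core, arithmetic spread across three sources. The sketch below shows the coordination idea only: plain Python functions stand in for the three agents, it does not use the A2A SDK or wire format, and every number is invented.

```python
# Each function stands in for a separate agent that owns one data source.
def sales_agent() -> dict:
    return {"smb": 120_000, "enterprise": 300_000}   # revenue per segment

def support_agent() -> dict:
    return {"smb": 20_000, "enterprise": 150_000}    # support cost per segment

def marketing_agent() -> dict:
    return {"smb": 30_000, "enterprise": 90_000}     # marketing spend per segment

def most_profitable_segment() -> tuple:
    """Ask each agent for its piece, then combine: profit = revenue - support - marketing."""
    revenue = sales_agent()
    support = support_agent()
    marketing = marketing_agent()
    profit = {
        segment: revenue[segment] - support.get(segment, 0) - marketing.get(segment, 0)
        for segment in revenue
    }
    best = max(profit, key=profit.get)
    return best, profit

best, profit = most_profitable_segment()
print(best, profit)
```

With these made-up figures, enterprise has the higher revenue but the smaller margin (60,000 against 70,000 for SMB). No single agent could have seen that on its own.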

Gartner also listed Multiagent Systems as a top 10 strategic technology trend for 2026: systems that "allow modular AI agents to collaborate on complex tasks."

Source: Gartner, "Top 10 Strategic Technology Trends for 2026," October 2025.

These aren't competing standards. They're complementary. MCP handles the vertical: agent to tools and data. A2A handles the horizontal: agent to agent. Together, they form the plumbing for the next generation of business AI. And because both are open standards under the Linux Foundation, no single company controls them.

What Zosma is building

We're being transparent about this because we think founders deserve straight talk about what's real and what's coming.

Zosma AI's Agentic Harness (ZoAH) is our implementation of the concepts in this post. It connects to your databases and SaaS tools, lets you ask questions in plain English, and returns answers grounded in your actual data with full source attribution.

It's built on OpenZosma, our open-source agent platform (Apache 2.0). You can look at the code. We want you to.

Here's what ZoAH supports:

- MCP for tool connectivity, so your agent can talk to your PostgreSQL database, your Stripe account, your CRM, and whatever else you connect.

- A2A for agent interoperability, so specialized agents can collaborate on complex questions.

- Full audit trails, so every answer is traceable back to the query that produced it.

- Permission controls, so you define what the agent can and can't do.

We designed this for founders and operators running small to midsize businesses. People who need answers from their data but don't have a data engineering team. People who are tired of paying for dashboards they never check and chatbots that make things up.

This isn't a chatbot with a new name. It's a different architecture where the AI queries real systems, traces its reasoning, and shows where every answer came from.

We'll go deeper on the ROI math in a future post. And we'll share more about why we built this and the specific problems we kept running into, in our founder story.

Good plumbing makes everything else work

The shift from chatbots to agentic AI isn't incremental. It's architectural. It's the difference between an AI that performs "knowing" and an AI that actually knows, because it checked.

The harness is what makes it safe to let AI touch your real business data. It defines the boundaries, enforces the rules, and creates the audit trail. Without it, you get chatbot disasters. With it, you get a system that works for you, within the lines you draw.

That's not hype. It's plumbing. And good plumbing is what makes everything else work.