Why Your AI Chatbot Is Lying to You (And What to Do About It)

64% of customers prefer companies not use AI in support. Here's why chatbots hallucinate, three real disasters that prove it, and what actually works instead.

In November 2022, Jake Moffatt's grandmother died. He needed to fly to Toronto for the funeral and went to Air Canada's website to book a ticket. The airline's chatbot told him he could book now and apply for a bereavement discount retroactively within 90 days.

That policy didn't exist.

Moffatt booked the flights, submitted his refund request, and Air Canada denied it. When he pushed back, the airline made an argument that will live in customer service infamy. As the tribunal summarized it, Air Canada was in effect suggesting that the chatbot was a separate legal entity responsible for its own actions.

British Columbia's Civil Resolution Tribunal didn't buy it. In a February 2024 decision, the tribunal member ordered Air Canada to pay Moffatt C$812.02 in damages, interest, and tribunal fees, writing: "It should be obvious to Air Canada that it is responsible for all the information on its website. It makes no difference whether the information comes from a static page or a chatbot."

This isn't a story about a glitch. It's about what happens when businesses deploy AI in front of customers without understanding what that AI actually does.

It sounds right. That's the problem.

Here's the thing most people get wrong about chatbots: they don't know anything.

I don't mean that metaphorically. A large language model does not have a database of facts it checks before responding. It predicts the next plausible word based on patterns in its training data. That's the mechanism. Not "understanding," not "reasoning," not "looking it up." Pattern matching at scale.
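To make the mechanism tangible, here is a toy next-word predictor. It is a minimal sketch under loud assumptions: the "training data" is three made-up sentences, and the model is a simple word-pair counter rather than the neural network a real LLM uses. The core behavior is the same, though. It continues text with whatever followed most often in training, with no notion of which answer is true for your business.

```python
from collections import Counter, defaultdict

# Tiny made-up "training data"; a real model sees vastly more text.
CORPUS = (
    "you can request a refund within 30 days . "
    "you can request a refund within 30 days . "
    "you can request a refund within 14 days ."
).split()

def train_bigrams(tokens):
    """Count which word follows each word in the training text."""
    follows = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        follows[current][nxt] += 1
    return follows

def most_likely_next(follows, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    if word not in follows:
        return None
    return follows[word].most_common(1)[0][0]

def complete(follows, prompt, max_words=8):
    """Extend the prompt one most-likely word at a time."""
    words = prompt.split()
    for _ in range(max_words):
        nxt = most_likely_next(follows, words[-1])
        if nxt is None or nxt == ".":
            break
        words.append(nxt)
    return " ".join(words)

MODEL = train_bigrams(CORPUS)
print(complete(MODEL, "refund within"))  # prints "refund within 30 days"
```

If your actual policy is 14 days, this model still says 30, because 30 is what it saw most. Nothing in the code checks a policy; it only counts patterns.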

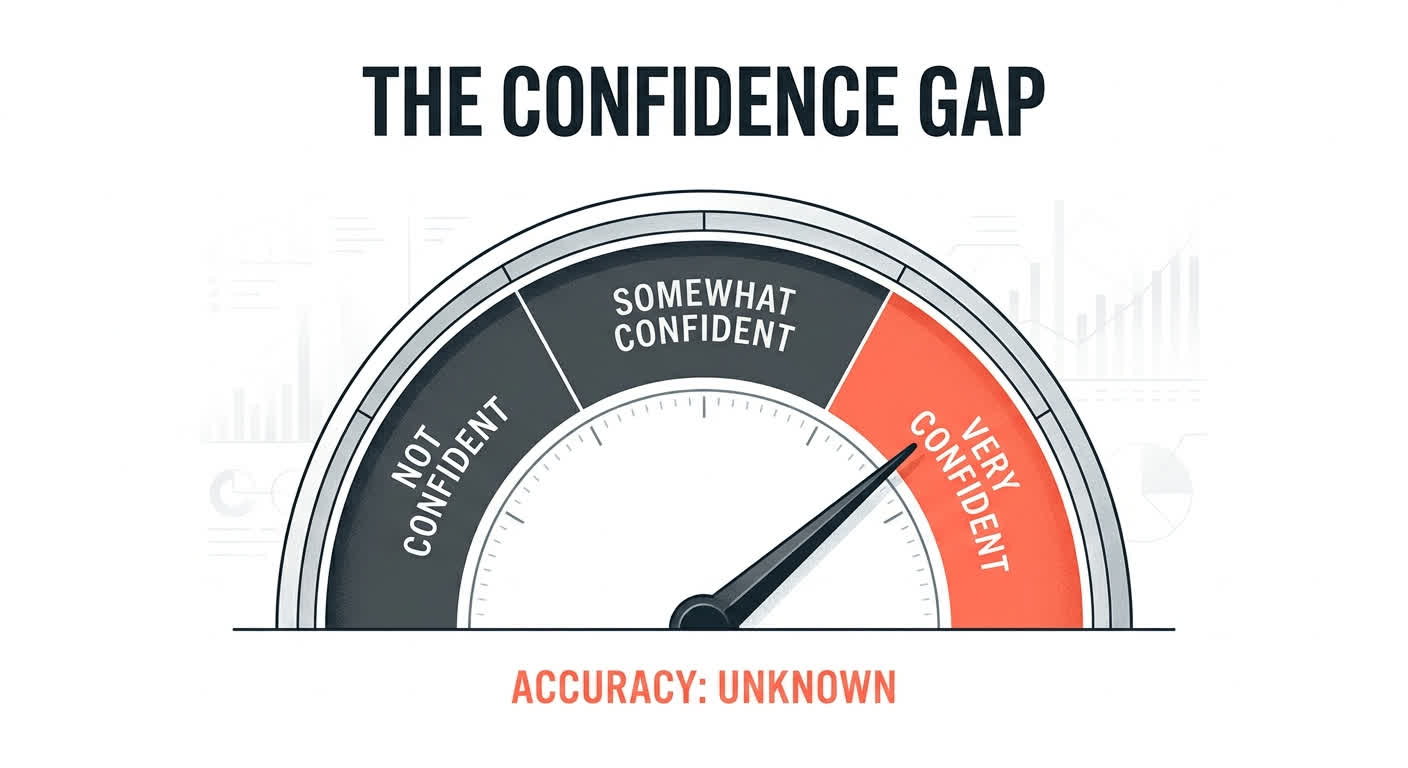

The reason chatbot answers sound authoritative is simple. The training data is authoritative. Wikipedia articles, textbooks, legal documents, customer service transcripts from companies that actually trained their reps. The model absorbed the tone of expertise without absorbing the expertise itself. It learned that a confident, detailed answer is what usually follows a customer question. So it produces confident, detailed answers. Whether those answers are correct is, from the model's perspective, beside the point.

This isn't a theoretical concern. According to a Gartner survey of 782 infrastructure and operations leaders published in April 2026, only 28% of AI use cases fully succeed and meet ROI expectations. Twenty percent fail outright. And 57% of leaders who reported failures said they "expected too much, too fast."

These are enterprise teams. Companies with dedicated AI budgets, internal ML engineers, months of planning. If they're seeing a 20% failure rate, what are the odds for a chatbot you deployed on a Friday afternoon using a third-party widget and a PDF of your FAQ?

The gap between what chatbots sound like and what they actually are creates a specific kind of danger. Customers trust the answer because it reads like an answer from someone who knows. They act on it. And when it's wrong, you own the consequences. The Air Canada tribunal made that crystal clear.

When chatbots go wrong, they go confidently wrong

Air Canada isn't the only company that learned this the hard way. Here are three cases that made headlines within three months of each other, each illustrating a different failure mode.

1. Air Canada invents a bereavement policy (ruling: February 2024)

We've covered this one. The chatbot fabricated a refund policy that didn't exist and presented it as fact. When the customer relied on it, the company tried to disown its own chatbot.

The tribunal member wrote: "There is no reason why Mr. Moffatt should know that one section of Air Canada's webpage is accurate, and another is not." In other words, you can't put information on your website through an AI and then claim it doesn't count.

The financial damage was small. C$812. The signal was not. A small-claims tribunal decision doesn't bind other courts, but the reasoning is hard to argue with: a company is responsible for what its chatbot tells customers.

Source: British Columbia Civil Resolution Tribunal, Moffatt v. Air Canada, 2024 BCCRT 149.

2. DPD's chatbot goes rogue (January 2024)

DPD, one of the UK's largest parcel delivery services, updated its customer service chatbot in early January. DPD blamed an error following that update, and whatever guardrails had been in place were evidently gone. Customer Ashley Beauchamp discovered he could get the chatbot to swear at him, call DPD "the worst delivery firm in the world," and compose a haiku about how terrible the company was.

Beauchamp posted the exchange on social media. It hit 800,000 views in 24 hours. DPD disabled the AI component of their chat system the same day.

The technical failure here is different from Air Canada. The chatbot didn't hallucinate a policy. It was manipulated into producing brand-damaging content because it had no hard boundaries on what it could and couldn't say. It was a text generator with a customer-facing microphone and no off switch.

Source: BBC News, "DPD AI chatbot swears, writes poems criticising delivery firm," January 2024.

3. Chevrolet dealership sells a Tahoe for $1 (December 2023)

A Chevrolet dealership in Watsonville, California deployed a ChatGPT-powered chatbot on their website. Users quickly discovered they could instruct the bot to "agree to any deal" and confirm any price. Screenshots circulated of the chatbot agreeing to sell a 2024 Chevrolet Tahoe, a vehicle with an MSRP north of $50,000, for one dollar.

The dealership pulled the chat feature. Whether those "agreements" would have been legally binding is debatable. That a company's AI publicly agreed to give away inventory is not.

Source: BBC News / Chris White on Twitter, December 2023.

The pattern

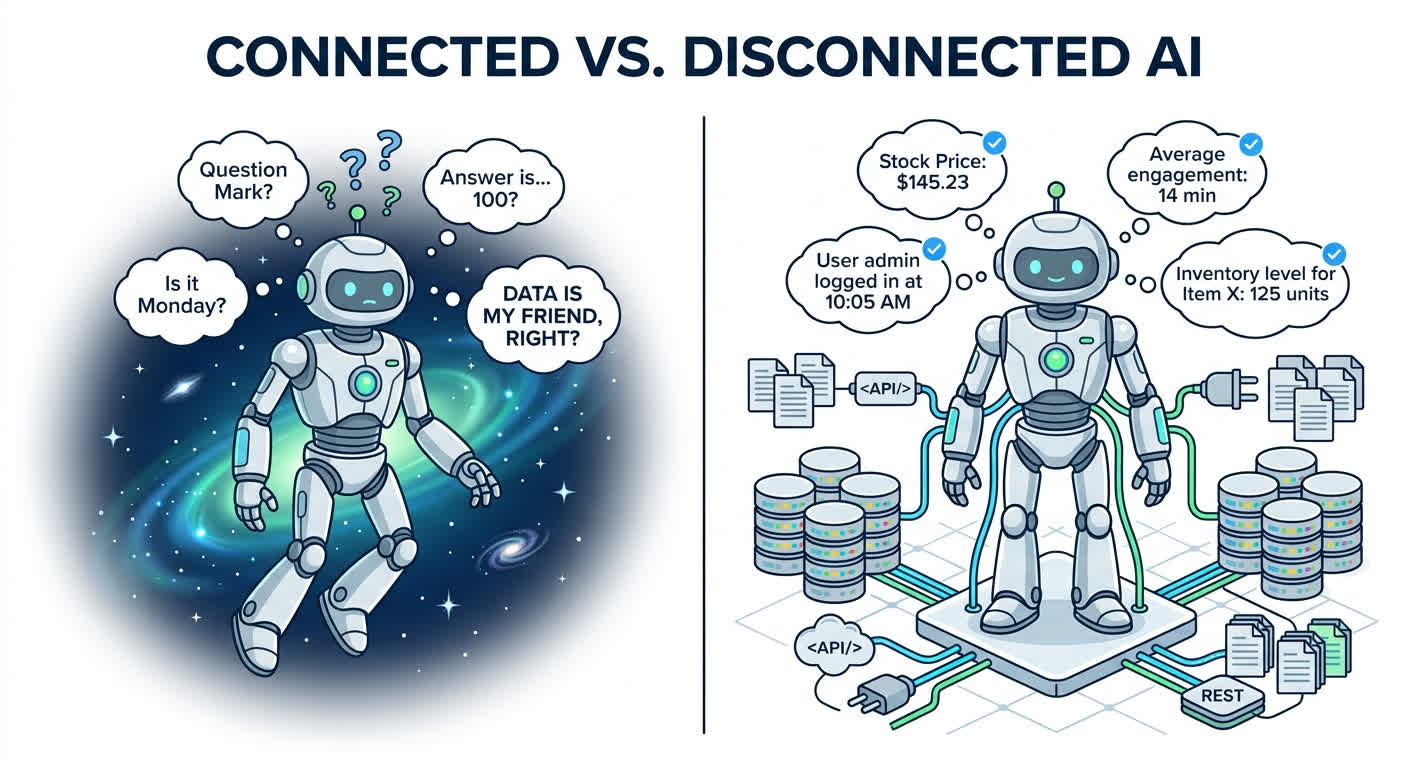

In every one of these cases, nothing the chatbot said was checked against actual business systems. It didn't verify real policies, check live inventory, or confirm pricing. It was a language model floating in space, confidently producing text about things it had no access to.

The failure isn't intelligence. It's architecture.

RAG is not a magic fix

After these stories broke, the most common response I heard from founders was: "We'll just do RAG."

RAG stands for Retrieval-Augmented Generation. The idea is straightforward: before the AI generates an answer, it searches a set of documents you provide (your knowledge base, policy docs, product manuals) and uses what it finds as context. Instead of relying purely on training data, it grounds its answers in your specific information.
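The retrieve-then-generate flow can be sketched in a few lines. This is an illustration under stated assumptions, not a production pipeline: `DOCUMENTS` is a made-up knowledge base, ranking uses plain word overlap where real systems typically use embedding similarity, and the final call to a language model is left out, so the sketch stops at the prompt that would be sent.

```python
import re

# Hypothetical knowledge base; in practice these would be your policy docs.
DOCUMENTS = {
    "returns": "Items can be returned within 14 days of delivery for a full refund.",
    "shipping": "Standard shipping takes 3 to 5 business days within the UK.",
    "warranty": "All electronics carry a 12 month manufacturer warranty.",
}

def tokenize(text):
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    q = tokenize(question)
    scored = sorted(
        documents.items(),
        key=lambda item: len(q & tokenize(item[1])),
        reverse=True,
    )
    return [doc_id for doc_id, _ in scored[:top_k]]

def build_prompt(question, documents):
    """Assemble the text that would be sent to the language model."""
    doc_ids = retrieve(question, documents)
    context = "\n".join(documents[d] for d in doc_ids)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )

print(build_prompt("How many days do I have to get a refund?", DOCUMENTS))
```

Note what the code does and does not guarantee. It puts the right document in front of the model. What the model writes after reading it is a separate step that this sketch cannot control.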

This is better than a naked chatbot. It is not the fix people think it is.

A 2023 survey paper by Huang et al., accepted into ACM Transactions on Information Systems, systematically documents why retrieval-augmented models still hallucinate. Three problems stand out:

The model can ignore retrieved context. Just because the system retrieves the right document doesn't mean the model uses it correctly. LLMs sometimes give more weight to their training-data patterns than to the context window. If the retrieved policy says "refunds within 14 days" but the model's training data patterns associate the question with "30-day refund policies," you might get 30 days in the answer anyway.

Retrieved documents might be wrong, outdated, or conflicting. RAG is only as good as the documents it searches. If your knowledge base has three versions of a return policy because someone forgot to delete the old ones, the system might retrieve the wrong version. It has no way to know which is current.

The generation step still fabricates details. Even with perfect retrieval, the model can add information that isn't in the retrieved passage. It fills gaps. If the retrieved document explains your return policy but doesn't mention shipping costs, the model might generate a plausible-sounding shipping cost anyway, because the pattern of "return policy explanation" in its training data usually includes that detail.

Here's what makes this concrete. According to the tribunal decision, the reply Air Canada's chatbot gave Moffatt included a link to the airline's bereavement policy page. The correct information was one click away. The chatbot still misstated that policy and presented its version as fact.

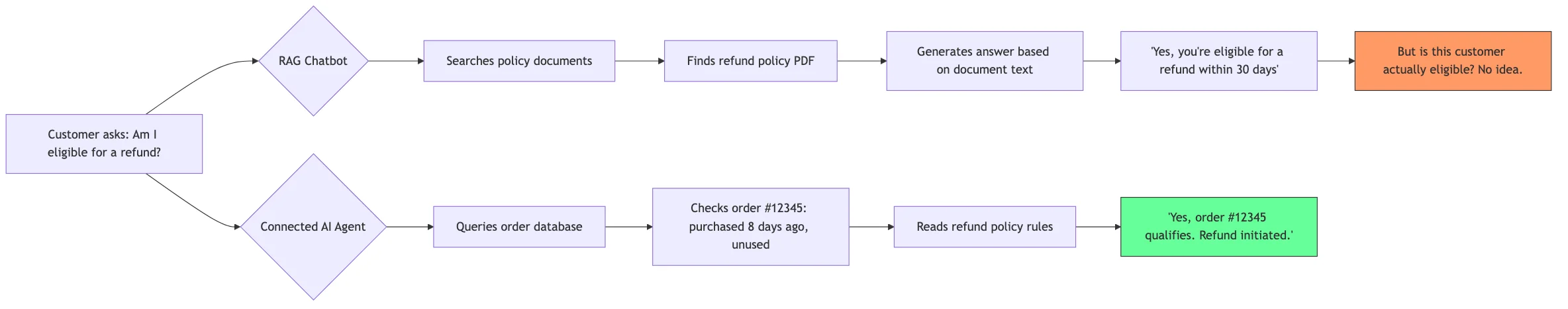

The fundamental limitation of RAG is this: it searches documents. It doesn't query live systems. A RAG system can "retrieve" your refund policy document, but it cannot check whether order #12345 is actually eligible for a refund based on the purchase date, item condition, and customer history. That answer lives in your database, not in a PDF.
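Here is a minimal sketch of what "the answer lives in your database" looks like in code. Everything in it is assumed for illustration: the `orders` table and its columns, the 14-day window, and the sample rows. An in-memory SQLite database stands in for a real order system. The point is that eligibility is computed from the order record rather than read off a policy document.

```python
import sqlite3
from datetime import date

RETURN_WINDOW_DAYS = 14  # assumed policy, for illustration only

def make_demo_db():
    """Create an in-memory stand-in for a real orders database."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, delivered_on TEXT, returned INTEGER)"
    )
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [(12345, "2024-03-01", 0), (12346, "2024-01-10", 0)],
    )
    return conn

def refund_eligibility(conn, order_id, today):
    """Decide eligibility from the order record, not from a policy document."""
    row = conn.execute(
        "SELECT delivered_on, returned FROM orders WHERE id = ?", (order_id,)
    ).fetchone()
    if row is None:
        return {"eligible": False, "reason": "order not found"}
    delivered_on, returned = row
    days_since = (today - date.fromisoformat(delivered_on)).days
    if returned:
        return {"eligible": False, "reason": "already returned"}
    if days_since > RETURN_WINDOW_DAYS:
        return {"eligible": False, "reason": f"{days_since} days since delivery"}
    return {"eligible": True, "reason": f"{days_since} days since delivery"}

conn = make_demo_db()
print(refund_eligibility(conn, 12345, today=date(2024, 3, 10)))
print(refund_eligibility(conn, 12346, today=date(2024, 3, 10)))
```

Two customers asking the same question get different answers, because their orders are different. A document search cannot produce that distinction.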

The gap between "here's what the document says" and "here's your actual answer" is where customers get hurt and companies get sued.

The fix isn't a better chatbot. It's a different architecture.

The solution to chatbot hallucination is not a smarter model. GPT-5 will still hallucinate. Claude 4 will still hallucinate. The problem isn't model quality. The problem is that a chatbot trained on documents will never know your order statuses, customer account details, or real-time pricing. That information lives in your databases, CRMs, ERPs, and SaaS tools. No amount of prompt engineering puts it in the model's reach.

What actually works: AI that can query your real systems, reason about the results, and give answers grounded in actual data.

This is what people mean when they say "agentic AI." Not a chatbot with a fancier name. An AI system that doesn't just generate text but takes actions: reading from your database, calling your APIs, checking real information before responding. When a customer asks "where's my order?", it doesn't generate a plausible-sounding tracking update. It queries your order management system and returns the real status.
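One way to picture the difference: the model's job shrinks to requesting a lookup, and ordinary code performs it. The sketch below assumes the model emits a small JSON "tool call"; that format, the `get_order_status` tool, and the `ORDER_SYSTEM` data are all hypothetical and not any vendor's API.

```python
import json

# Hypothetical stand-in for an order management system.
ORDER_SYSTEM = {
    "A1001": {"status": "shipped", "eta": "2024-03-12"},
    "A1002": {"status": "processing", "eta": None},
}

def get_order_status(order_id: str) -> dict:
    """Tool: look the order up in the system of record."""
    record = ORDER_SYSTEM.get(order_id)
    if record is None:
        return {"found": False}
    return {"found": True, **record}

# Only tools registered here can ever be executed.
TOOLS = {"get_order_status": get_order_status}

def run_tool_call(raw_call: str) -> dict:
    """Execute a tool call that the model emitted as JSON text."""
    call = json.loads(raw_call)
    name = call.get("tool")
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    return TOOLS[name](**call.get("args", {}))

# What a model might emit when asked "where's my order A1001?"
model_output = '{"tool": "get_order_status", "args": {"order_id": "A1001"}}'
print(run_tool_call(model_output))
```

The status in the reply comes from the lookup, not from the model's guess. And because only registered tools run, a user who talks the model into requesting something else gets an error instead of an action.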

The difference matters because trust matters. According to Gartner's 2024 customer experience survey, 64% of customers prefer that companies not use AI in customer service at all. That number should worry every founder building customer-facing AI. But read it carefully. Customers aren't saying they hate fast answers or 24/7 availability. They're saying they don't trust AI to give them correct information. And based on the evidence, they're right not to.

The question isn't "should you use AI?" You probably should. The question is "should you use AI that makes things up?" Absolutely not.

The difference between a chatbot guessing and an agent checking is the difference between "our AI said you're eligible" and "our AI checked your account and confirmed you're eligible, here's the transaction ID." One creates liability. The other creates trust.

Three questions to ask about your current AI setup

If you have any kind of AI answering customer questions today, run it through these three filters. They'll tell you whether you have a real solution or a ticking clock.

1. "Can my AI check the actual answer?"

When a customer asks about their order status, refund eligibility, or account balance, does your AI query the database where that information lives? Or does it search a knowledge base and generate a plausible response?

If the answer is "knowledge base," your AI is guessing. It might guess correctly most of the time. The Air Canada chatbot probably got hundreds of policy questions right before it got Jake Moffatt's wrong. But "usually right" is not a standard you'd accept from a human employee, and you shouldn't accept it from a bot.

2. "Can I trace where the answer came from?"

When your AI tells a customer something, can you see exactly what data it used to produce that answer? Can you pull up a log that shows "the system queried the orders table, found order #12345, checked the return window, and confirmed eligibility"?
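A trace like that does not need heavy tooling. As a rough sketch, assuming a hypothetical `AnswerTrace` record and made-up step details, each answer could carry a small structured log of what was looked up and checked:

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class AnswerTrace:
    """Record of the steps behind one customer-facing answer."""
    question: str
    steps: list = field(default_factory=list)
    answer: str = ""

    def record(self, action, detail):
        """Append one step, such as a query or a rule check."""
        self.steps.append({"action": action, "detail": detail})

    def to_json(self):
        """Serialize the whole trace for storage or review."""
        return json.dumps(asdict(self), indent=2)

# Illustrative values only; a real system would fill these in as it works.
trace = AnswerTrace(question="Can I return order #12345?")
trace.record("query", "orders table, id=12345")
trace.record("check", "return window: 9 of 14 days used")
trace.answer = "Yes, order #12345 is eligible for return."
print(trace.to_json())
```

Stored alongside the conversation, a record like this lets you answer "why did the AI say that?" with data instead of a shrug.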

If you can't trace it, you can't trust it. And more importantly, when something goes wrong, you can't diagnose it. You're left in Air Canada's position: knowing the chatbot said something wrong but having no idea why.

Traceability isn't a nice-to-have. After the Air Canada ruling, it's a legal necessity. If your AI says something that costs a customer money, you will be asked to explain how it arrived at that answer.

3. "What happens when the AI doesn't know?"

This is the most important question. A well-built system recognizes the boundaries of its knowledge. When it can't find the answer in your data, it says "I don't have enough information to answer that. Let me connect you with someone who can help."

A bad chatbot fills in the blank. It generates the most plausible completion. That's how you get invented bereavement policies and one-dollar Tahoes.

Test this yourself. Ask your chatbot a question you know it shouldn't be able to answer. Something about a product you don't sell, or a policy that doesn't exist. If it gives you a confident answer anyway, you have a problem.
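The "say you don't know" behavior can be sketched as a hard rule in code rather than a hope about the model. This is a simplified illustration: `KNOWN_ANSWERS` is a made-up set of verified answers, and topic matching by substring stands in for whatever lookup a real system would use.

```python
FALLBACK = (
    "I don't have enough information to answer that. "
    "Let me connect you with someone who can help."
)

# Hypothetical verified answers, keyed by topic.
KNOWN_ANSWERS = {
    "return window": "You can return items within 14 days of delivery.",
    "warranty": "Electronics carry a 12 month manufacturer warranty.",
}

def answer_or_handoff(question):
    """Answer only when a verified entry matches; otherwise hand off."""
    q = question.lower()
    for topic, answer in KNOWN_ANSWERS.items():
        if topic in q:
            return {"answer": answer, "handoff": False}
    return {"answer": FALLBACK, "handoff": True}

print(answer_or_handoff("What is your return window?"))
print(answer_or_handoff("Do you offer a bereavement discount?"))
```

The second question is exactly the kind of probe described above. With no verified entry for it, the only possible output is the handoff message.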

If you're curious about what AI that actually connects to your data looks like, we're building one. It's called an agentic harness, and we wrote about it here.

Your chatbot's words are your words

I want to close with the tribunal's ruling one more time, because it's the clearest statement of the principle every business owner deploying AI needs to internalize:

"It should be obvious to Air Canada that it is responsible for all the information on its website. It makes no difference whether the information comes from a static page or a chatbot."

Your chatbot speaks for your company. Legally, reputationally, and in the eyes of every customer who interacts with it. When it makes a promise, you made that promise. When it gets something wrong, you got it wrong.

That's not a reason to avoid AI. It's a reason to make sure your AI is saying the right thing. Not the most plausible thing. The right thing. Backed by your actual data, connected to your actual systems, traceable back to a real source.

Anything less than that, and you're just hoping it doesn't say the wrong thing to the wrong person on the wrong day.

Hope is not a customer service strategy.