I'm a Solo Founder. I Replaced My Data Analyst with an AI Agent.

I spent 10+ hours a week pulling numbers from Stripe, PostgreSQL, and HubSpot. Here's what happened when I stopped.

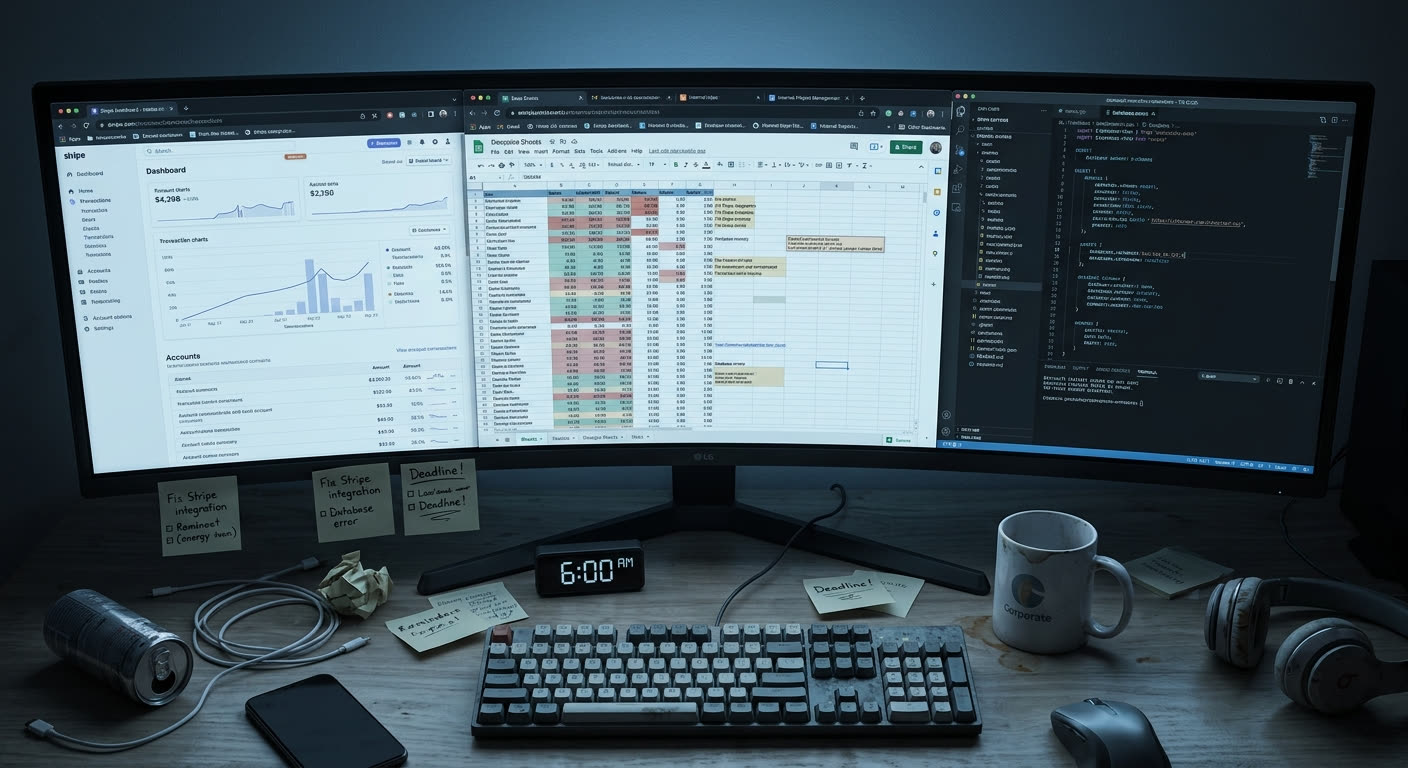

Every Monday at 6am, before I did anything else, I opened Stripe. Then PostgreSQL. Then HubSpot. Then the Google Sheet I hadn't named properly because I kept creating new ones.

Two hours later, I'd have a rough picture of how the previous week went. Revenue, new trials, churn, support tickets, pipeline. I'd paste the numbers into a Slack message to myself because that was faster than finding last week's spreadsheet and remembering which column meant what.

This was my ritual for over a year.

The low point came on a Tuesday morning when an advisor asked me a simple question during a call: "What's your current churn rate?" I knew the number was somewhere. I'd calculated it the previous Friday. But I couldn't remember if it was in the Sheet called "Q3 Metrics," the one called "Weekly Numbers (NEW)," or the one called "Copy of Weekly Numbers (NEW) (2)." I told him I'd follow up by end of day.

After the call, I Googled my own churn rate. Typed the company name into Google along with "churn rate" hoping maybe I'd mentioned it in a tweet or a blog post. That's when I realized something was deeply broken.

This isn't a success story. Not yet, anyway. It's the honest version of what happened when I stopped being my own data analyst.

The data tax

I tracked my time for three weeks. Not because I'm disciplined, but because I was trying to justify hiring someone and needed proof for myself.

Here's what the numbers looked like:

- Monday morning data pull: 2 hours. Opening each tool, exporting, copying, reconciling.

- Investor update prep (biweekly, averaged): 1 hour per week. Pulling the same numbers again, but in a slightly different format.

- Ad-hoc questions throughout the week: 30 minutes per day. "How many trials signed up since the feature launch?" "What was the conversion rate on that landing page?" "Did that enterprise lead ever respond?" Each question meant opening 1-3 tools and doing arithmetic.

- Friday summary: 2 hours. Basically Monday's ritual again, but looking forward instead of backward.

Total: roughly 10.5 hours a week. On data.

That's more than a full workday every week spent measuring the business instead of running it. And the output wasn't even good. It was a rough sketch. Good enough to not be flying completely blind, but nowhere near the kind of analysis that actually changes decisions.

The quality problem was worse than the time problem. Stripe showed one revenue number. My spreadsheet showed another. The difference was $340. I spent 20 minutes tracking down the discrepancy: one included a partial refund from mid-month, the other didn't. Twenty minutes to reconcile a $340 difference that didn't change any decision I was going to make.

The moment that stung the most: a potential advisor I was trying to impress asked, "What's your LTV:CAC ratio?" I had the data. I had Stripe for revenue, HubSpot for acquisition costs, PostgreSQL for cohort retention. The inputs existed. But combining them into a single ratio would have taken me an hour of export-match-calculate. I said "give me a day." He said "sure." The pause in his voice said something else.

A number that should take 10 seconds took 24 hours. That's the data tax.

The breaking point

The breaking point wasn't gradual. It was a specific meeting.

I was presenting to two advisors. Informal, not a board meeting, but people whose opinion I cared about. I had three numbers for monthly revenue on three different surfaces: $48,200 in Stripe, $47,400 in my spreadsheet, and $49,100 on a slide in my pitch deck.

None of them was wrong, exactly. Stripe showed gross revenue including a subscription that started mid-month. The spreadsheet used net revenue after refunds but was missing two days because I'd pulled the data on the 29th. The pitch deck used a monthly figure derived from an annualized run rate, which I'd rounded up to the nearest hundred.

One advisor looked at the other. "Which number is the real one?"

I said all of them, depending on definition. Which was true and also entirely unhelpful.

That evening I wrote down a single sentence on a sticky note: "I don't have a data problem. I have an integration problem." The data existed. It lived in perfectly good systems. The problem was that no single place combined them into a coherent picture without me manually doing it every time.

I briefly considered hiring a data analyst. I looked at salary ranges. For someone competent enough to handle Stripe API exports, write SQL, and build reports in a BI tool, I was looking at $60,000 to $80,000 per year. My entire annual runway was barely north of that. Hiring was not the answer. Not at this stage.

What I tried first (and why it didn't work)

I'm not someone who jumps to building things. I spent three months trying existing solutions first.

Zapier. I connected Stripe to Google Sheets. New charge fires, row gets added. Worked beautifully for about two weeks. Then an edge case broke it: a customer upgraded mid-billing-cycle, which generated a proration event that Zapier didn't handle the way I expected. My spreadsheet showed the upgrade as two separate charges. Revenue was double-counted for that customer until I caught it manually six days later. I fixed the Zap. A week later, a different edge case. I fixed that one too. After the third break I realized I was just building a fragile pipeline that needed constant babysitting.

Metabase. I spent a Saturday setting it up, connecting it to my PostgreSQL database. The charts were genuinely pretty. I felt productive. But Metabase only talked to my database. Stripe data wasn't in there in real time. HubSpot wasn't in there at all. So I still had Metabase open in one tab and Stripe and HubSpot in two others. I'd replaced three tabs with three different tabs.

Custom Python script. This one I was actually proud of. Pulled from the Stripe API, joined with database queries, output a clean summary. It ran every Monday at 5am via a cron job. For one glorious week I woke up to a perfectly formatted email with all my numbers. Then Stripe deprecated a field name in a minor API update. The script broke at 5am on a Monday. I spent an hour debugging a data pipeline before my first meeting. I was now a solo founder who was also a part-time DevOps engineer for a reporting script nobody used but me.

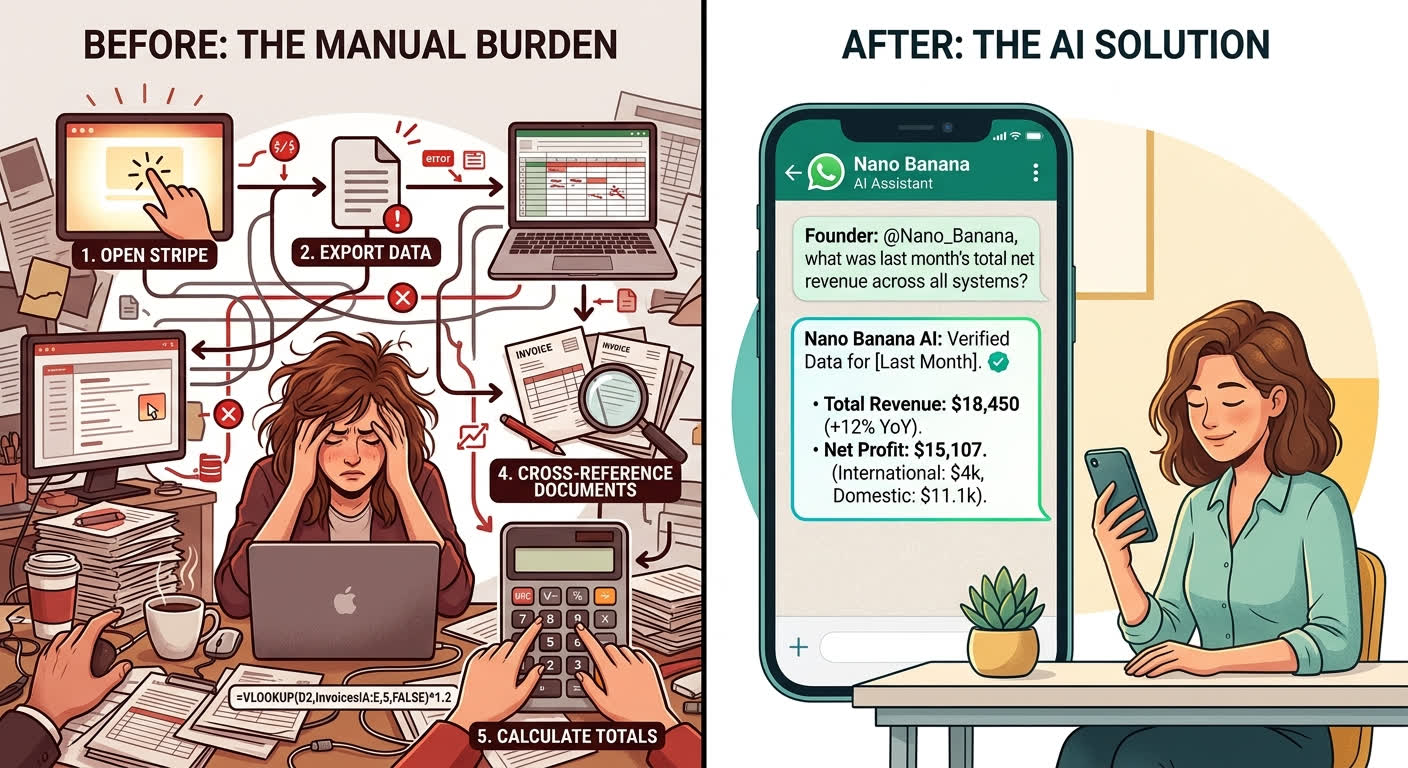

The pattern was clear. Each tool solved one piece of the problem and created a new problem. Zapier couldn't handle edge cases. Metabase couldn't cross systems. The Python script couldn't maintain itself. And all of them required me to define the exact output format up front, which meant I still couldn't ask ad-hoc questions without writing new code or new Zaps.

Then I found the agentic approach

I don't remember the exact day, but sometime in late 2024 I read Anthropic's "Building Effective Agents" article. It described something that clicked immediately: the idea that an AI agent isn't a chatbot. It's a system that can reason about a question, decide which tools to use, query those tools, and assemble an answer from real data.

That was different from everything I'd tried. Not automation, which is rigid and breaks on edge cases. Not dashboards, which are passive and require you to know what to look at. Not chatbots, which are disconnected from real data and make things up.

An agent could sit between me and my systems. I'd ask a question in plain English. It would figure out which system to query, run the query, and come back with an answer grounded in actual data. If it needed to combine Stripe and PostgreSQL data, it would do both. If the question was ambiguous, it could ask me to clarify instead of guessing.

I built the first version in a weekend. It did exactly one thing: connected to my PostgreSQL database and answered questions in English. No Stripe integration. No HubSpot. Just the database.

I asked it: "How many new users signed up last week?"

It wrote a SQL query, ran it, and came back: "47 new users signed up between March 4 and March 10. That's up from 38 the previous week."

Fourteen seconds. The answer I would have gotten by logging into the admin panel, navigating to the users table, setting the date filter, and counting rows. Except I didn't do any of that. I typed a question and got a number.

That was enough to know this was the right direction.
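For the signup question above, here is a minimal sketch of the kind of query an agent might generate. It is not the actual query from my system: the `users` table, the `created_at` column, the demo rows, and the year are all assumptions for illustration, and an in-memory SQLite database stands in for PostgreSQL so the snippet runs anywhere.

```python
import sqlite3
from datetime import date, timedelta


def weekly_signups(conn, week_start):
    """Return (this_week, previous_week) signup counts.

    Each week is a 7-day window; the upper bound is exclusive.
    """
    prev_start = week_start - timedelta(days=7)
    week_end = week_start + timedelta(days=7)
    sql = """
        SELECT
            SUM(CASE WHEN created_at >= :start THEN 1 ELSE 0 END),
            SUM(CASE WHEN created_at <  :start THEN 1 ELSE 0 END)
        FROM users
        WHERE created_at >= :prev AND created_at < :end
    """
    params = {
        "start": week_start.isoformat(),
        "prev": prev_start.isoformat(),
        "end": week_end.isoformat(),
    }
    this_week, last_week = conn.execute(sql, params).fetchone()
    # SUM returns NULL (None) when no rows match at all.
    return (this_week or 0, last_week or 0)


# Demo data (made up): ISO date strings compare correctly as text.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, created_at TEXT)")
demo_dates = ["2025-02-27", "2025-03-03", "2025-03-04", "2025-03-06", "2025-03-10"]
conn.executemany("INSERT INTO users (created_at) VALUES (?)", [(d,) for d in demo_dates])

this_week, last_week = weekly_signups(conn, date(2025, 3, 4))
print(f"{this_week} new users this week, {last_week} the week before")
```

Computing both weeks in one query is what makes the "up from the previous week" comparison cheap: the agent gets the answer and its context in a single round trip.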

What it actually looks like now

The interface is WhatsApp. I message the agent the same way I'd message a colleague. No dashboards. No logins. No special app.

Here's what real conversations look like:

Me: How did MRR change this week?

Agent: MRR increased 2.8% week-over-week, from $51,400 to $52,840. Breakdown: 6 new subscriptions added $2,190 in new MRR. 2 churns lost $750. Net new MRR: $1,440. The largest new subscription was Hendricks & Co at $890/mo (Enterprise plan). Source: Stripe billing data, queried at 6:42am.

Me: Which trial users haven't converted after 14 days?

Agent: 8 trial users are past the 14-day mark without converting:

- Sarah Chen (signed up Mar 28, last active Apr 6)

- Marcus Webb (signed up Mar 26, last active Mar 31)

- Priya Iyer (signed up Mar 29, last active Apr 8)

- ... [5 more with names and dates]

3 of these haven't logged in for 5+ days. Want me to pull their usage stats? Source: PostgreSQL users table + Stripe subscription status, queried at 7:01am.

Me: Compare this month's support tickets to last month

Agent: April (through the 13th): 34 tickets, avg resolution time 4.2 hours. March (full month): 89 tickets, avg resolution time 5.1 hours. Projected April total at current pace: ~80 tickets, which would be a 10% decrease. Top category this month: billing questions (12 of 34). Last month's top category was onboarding issues (28 of 89), which dropped to 6 this month — likely related to the onboarding flow update shipped March 22. Source: HubSpot tickets + internal changelog, queried at 7:03am.
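The MRR figures in the first exchange are easy to sanity-check by hand. A small sketch of that arithmetic, assuming only new and churned subscriptions moved MRR that week (no upgrades or downgrades); the function name is mine, not part of any tool:

```python
def mrr_movement(start_mrr, new_mrr, churned_mrr):
    """Return (net new MRR, ending MRR, week-over-week growth in percent)."""
    net_new = new_mrr - churned_mrr
    end_mrr = start_mrr + net_new
    growth_pct = round(100 * net_new / start_mrr, 1)
    return net_new, end_mrr, growth_pct


# Numbers from the agent's reply: $51,400 starting MRR, $2,190 new, $750 churned.
net_new, end_mrr, growth_pct = mrr_movement(51_400, 2_190, 750)
print(net_new, end_mrr, growth_pct)  # 1440 52840 2.8
```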

Here's what happens under the hood. The agent receives my question, determines which data sources it needs (PostgreSQL, Stripe API, HubSpot, or some combination), connects to those systems, runs the appropriate queries, assembles the results, and returns a plain-English answer with the source cited. Every answer includes what was queried and when. I can see the exact SQL or API call if I want to verify.
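The flow described above can be sketched in a few dozen lines. This is a toy, not the production system: the connectors return canned dummy values instead of calling PostgreSQL, Stripe, or HubSpot, and a keyword lookup stands in for the language model's decision about which tools to use. Every name here (`ToolResult`, `choose_tools`, `answer`, the keyword lists) is hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Callable, Dict, List, Optional


@dataclass
class ToolResult:
    source: str  # system that was queried
    query: str   # exact SQL or API call, kept so the answer can be verified
    data: dict   # values returned by that system


# Stub connectors with dummy data. Real ones would hit the live systems.
def query_postgres(question: str) -> ToolResult:
    return ToolResult("PostgreSQL", "SELECT COUNT(*) FROM users WHERE created_at >= :week_start", {"new_users": 47})


def query_stripe(question: str) -> ToolResult:
    return ToolResult("Stripe", "GET /v1/subscriptions?status=active", {"active_subscriptions": 212})


def query_hubspot(question: str) -> ToolResult:
    return ToolResult("HubSpot", "tickets search (created this month)", {"tickets": 34})


TOOLS: Dict[str, Callable[[str], ToolResult]] = {
    "postgres": query_postgres,
    "stripe": query_stripe,
    "hubspot": query_hubspot,
}

# Stand-in for the model's routing decision.
KEYWORDS = {
    "postgres": ("user", "signup", "trial"),
    "stripe": ("mrr", "revenue", "subscription", "trial"),
    "hubspot": ("ticket", "lead", "pipeline"),
}


def choose_tools(question: str) -> List[str]:
    q = question.lower()
    return [name for name, words in KEYWORDS.items() if any(w in q for w in words)]


def answer(question: str, now: Optional[datetime] = None) -> str:
    now = now or datetime.now()
    results = [TOOLS[name](question) for name in choose_tools(question)]
    if not results:
        # Ambiguous question: ask instead of guessing.
        return "I couldn't tell which system to query. Can you clarify?"
    facts = "; ".join(f"{k}={v}" for r in results for k, v in r.data.items())
    sources = " + ".join(r.source for r in results)
    return f"{facts}. Source: {sources}, queried at {now:%H:%M}."


print(answer("Which trial users haven't converted after 14 days?"))
```

The part worth copying is the shape of `ToolResult`: keeping the source and the exact query next to the data is what lets every answer cite where it came from and when.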

My morning routine now: I send 3-4 questions on WhatsApp while making coffee. Takes about 90 seconds. By the time I sit down at my desk, I know how the business is doing. No tabs. No spreadsheets. No reconciliation.

And the ad-hoc questions throughout the day, the ones that used to add up to 30 minutes daily, now take about 15 seconds each. "Did anyone from the Acme Corp trial log in this week?" Type, send, answer.

What it can't do

This section matters more than the one before it. If I only told you the good parts, you'd be right not to trust me.

It can't do novel analysis. The agent finds what I ask for. It doesn't proactively tap me on the shoulder and say "hey, I noticed a weird pattern in your trial conversions this week." It's reactive, not curious. If I don't think to ask the question, the insight stays buried in the data. I still need to bring the judgment about what questions matter.

It can't do stakeholder communication. It gives me numbers. Precise, sourced, fast numbers. But my investor update isn't just numbers. It's narrative. It's "here's what the numbers mean and here's what we're doing about it." The agent can hand me the ingredients. Cooking is still my job.

It can't do data strategy. It can't tell me what I should be tracking. If I've never measured activation rate because I never defined what "activated" means in my product, the agent can't help. It answers questions about existing data. Deciding what data matters is a human problem.

It's bad at fuzzy judgment. "Is this customer happy?" isn't a query. Happiness is a vibe. The agent can tell me their usage dropped 40% and they filed two support tickets last week, and I can infer they're probably not thrilled. But the inference is mine. The agent deals in facts, not feelings.

It can't create data that doesn't exist. If I don't track something, no amount of clever querying will produce it. I learned this the hard way when I asked "which blog post drove the most signups?" and the agent told me it couldn't answer because I wasn't passing UTM parameters on my signup form. Correct answer. Frustrating answer.

So what is it actually great at? Speed. Consistency. Cross-system queries that used to take 20 minutes of tab-switching. Being available at 6am on a Monday without complaining. Giving the same answer to the same question every time, unlike my spreadsheets, which gave different answers depending on which version I opened.

It's a very good analyst who never sleeps, never gets the numbers wrong, and never brings strategic insight to the table. That trade-off is worth understanding clearly before you decide it's for you.

The math

Here's what changed in concrete terms.

Time before: ~10.5 hours per week on data tasks. Time after: ~30 minutes per week. Mostly spent on follow-up questions and the occasional "wait, why is that number different from what I expected?" investigation.

Weekly savings: 10 hours. Annual savings: ~500 hours.

At a founder's opportunity cost — and I'll use $100/hour, which is conservative for someone who should be selling, building product, and talking to customers — that's $50,000 per year in recovered time.
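Here is that arithmetic spelled out so you can plug in your own numbers. The one input not stated above is the number of working weeks per year; 50 is my assumption, and it is what makes 10 hours a week come out to roughly 500 hours.

```python
def recovered_time(hours_before, hours_after, weeks_per_year, hourly_value):
    """Return (hours saved per week, hours saved per year, dollar value per year)."""
    weekly_saved = hours_before - hours_after
    annual_hours = weekly_saved * weeks_per_year
    annual_value = annual_hours * hourly_value
    return weekly_saved, annual_hours, annual_value


# 10.5 h/week before, 0.5 h/week after, 50 working weeks (assumed), $100/hour.
weekly_saved, annual_hours, annual_value = recovered_time(10.5, 0.5, 50, 100)
print(weekly_saved, annual_hours, annual_value)  # 10.0 500.0 50000.0
```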

The cost of the AI agent approach is a fraction of a single hire. Even at the upper end, we're talking about a few hundred dollars a month in API costs and infrastructure, not $60,000-$80,000 in salary plus benefits plus management overhead plus the three months it takes to onboard someone into your data stack.

But the non-financial return is harder to quantify and honestly more valuable. I make decisions faster because I can check assumptions in 15 seconds instead of 15 minutes. I don't dread Monday mornings anymore. I go into advisor meetings with one set of numbers instead of three. I stopped creating new spreadsheets.

That last one might sound trivial. It's not. Every spreadsheet I created was an admission that the last system I built wasn't working. I haven't created a new one in four months.

That's worth more than the hours.

Why I'm building this for other founders

I didn't set out to build a company around this. I set out to stop spending my Monday mornings in spreadsheets.

But after I built it for myself and it worked, I started talking to other founders. Turns out the Monday morning ritual is nearly universal. The tab-switching, the copy-pasting, the Googling your own metrics because you can't find the right spreadsheet. Everyone does it. Nobody talks about it because it feels like something you should have figured out by now.

You haven't figured it out because the tools aren't built for how solo founders actually work. BI platforms assume you have a data team to configure them. Zapier assumes your workflows are predictable. Dashboards assume you know which chart to look at before you have the question.

If you want to understand the technical architecture behind what makes this work (how an AI agent connects to real systems without hallucinating), we wrote a detailed explainer: "What Is an Agentic Harness?" And if you want the hard numbers on when an AI agent makes financial sense versus hiring a human analyst, that's coming next: "The $50,000 Question: AI Agent vs. Hiring a Data Analyst."

If you're a solo founder spending your time being your own data analyst, you don't have to. The data is already in your systems. You just need a better way to ask.